Speech recognition in Hangouts Meet

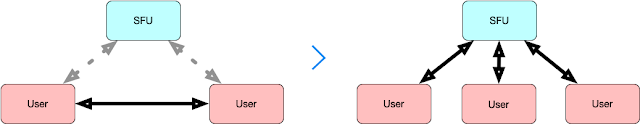

There are many possible applications for speech recognition in Real Time Communication services: live captions, simultaneous translation, voice commands, or storing/summarising audio conversations. Speech recognition in the form of live captioning has been available in Hangouts Meet for some months, but it was recently promoted to a button in the main UI and I have started to use it almost every day. I'm mostly interested in the recognition technology, and specifically in how to integrate DeepSpeech in RTC media servers to provide a cost-effective solution, but in this post I wanted to spend some time analysing how Hangouts Meet implemented captioning from a signalling point of view.

At a very high level there are at least three possible architectures for speech recognition in RTC services:

A) On-device speech recognition: This is the cheapest option, but not all devices support it, the quality of the models is not as good as in the clou...